Overview

Using the Spark Server SDK, users can create new order types ("Algos"). They implement the new functionality in C++ and build it as a shared library, which the Spark Server loads at run time.

If you want to access the Spark Server remotely, see the Spark Client SDK.

Speed

The Spark platform offers sub-10-microsecond socket-to-socket response times for algorithmic trading. With the Spark Server SDK, you can write your own order types in high-performance C++ and load them into the Spark Server.

Because your Algo runs inside the Spark Server process, there is virtually no overhead, so it can take full advantage of the speed Spark offers. Hosting compiled C++ directly in the server process allows an Algo to respond to the market thousands of times faster than one built with a proprietary drag-and-drop tool.

Easy to Get Started

If you are not actively developing in C++, setting up a complete development environment from scratch can be time-consuming. That is why we provide a ready-to-use development environment as a Docker image, so you can download and try the Server SDK quickly and easily.

Docker works across Windows, macOS, and Linux, so you don't need to change your development environment to try the Spark API. The image also packages every dependency you need, so it works the same way on every platform.

Implementation

Creating a new Algo begins with implementing a standard C++ class derived from IAlgoOrder. Start from the algo_template example to get going quickly.
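A minimal sketch of such a class follows. The real IAlgoOrder declaration lives in the SDK headers and is not reproduced here, so the interface below is a hypothetical stand-in: only the hook names come from this overview, while the `Params` map, the hook signatures, and the `quantity` parameter are assumptions made for illustration.

```cpp
#include <cstddef>
#include <map>
#include <stdexcept>
#include <string>

// Hypothetical stand-in for the SDK's IAlgoOrder: the hook names come from the
// overview, but the parameter types and return values are assumptions.
using Params = std::map<std::string, std::string>;

class IAlgoOrder {
public:
    virtual ~IAlgoOrder() = default;
    virtual bool do_before_launch(const Params& params) = 0;  // validate, allocate
    virtual void do_launch() = 0;                              // new order
    virtual void do_launch_attached() = 0;                     // attach to existing order
};

// A new order type is an ordinary C++ class that overrides the hooks.
class MyAlgo final : public IAlgoOrder {
public:
    bool do_before_launch(const Params& params) override {
        auto it = params.find("quantity");  // hypothetical required parameter
        if (it == params.end()) return false;
        try {
            std::size_t used = 0;
            long qty = std::stol(it->second, &used);
            // Reject trailing junk such as "12abc" and non-positive sizes.
            if (used != it->second.size() || qty <= 0) return false;
            quantity_ = qty;
        } catch (const std::exception&) {  // std::stol throws on non-numeric input
            return false;
        }
        return true;
    }

    void do_launch() override { launched_ = true; }
    void do_launch_attached() override { launched_ = attached_ = true; }

    long quantity() const { return quantity_; }
    bool launched() const { return launched_; }
    bool attached() const { return attached_; }

private:
    long quantity_ = 0;
    bool launched_ = false;
    bool attached_ = false;
};
```

Keeping validation in `do_before_launch` means a malformed order can be refused before any market activity starts.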

The first call your new Algo receives is do_before_launch, which is an opportunity to validate parameters and allocate any long-lived resources needed during execution. Next, it receives either do_launch in the case of a new order or do_launch_attached if the order is attaching to an existing order.
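The call sequence can be sketched from the host side as follows. `start_algo` and `TracingAlgo` are hypothetical helpers, the hook signatures are assumed, and so is the rule that a failed `do_before_launch` prevents either launch hook from running; the real Spark Server performs this sequencing internally and may differ in detail.

```cpp
#include <map>
#include <string>
#include <vector>

using Params = std::map<std::string, std::string>;  // assumed parameter format

// Hypothetical stand-in for IAlgoOrder; only the hook names come from the SDK overview.
class IAlgoOrder {
public:
    virtual ~IAlgoOrder() = default;
    virtual bool do_before_launch(const Params& params) = 0;
    virtual void do_launch() = 0;
    virtual void do_launch_attached() = 0;
};

// Records each hook call so the order of calls can be inspected.
class TracingAlgo : public IAlgoOrder {
public:
    bool accept = true;  // value do_before_launch reports
    std::vector<std::string> calls;

    bool do_before_launch(const Params&) override {
        calls.push_back("do_before_launch");
        return accept;
    }
    void do_launch() override { calls.push_back("do_launch"); }
    void do_launch_attached() override { calls.push_back("do_launch_attached"); }
};

// Hypothetical host-side driver: validate first, then run exactly one launch hook.
bool start_algo(IAlgoOrder& algo, const Params& params, bool attaching_to_existing) {
    if (!algo.do_before_launch(params)) return false;  // assumed: rejection stops the launch
    if (attaching_to_existing) {
        algo.do_launch_attached();
    } else {
        algo.do_launch();
    }
    return true;
}
```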

The Algo receives a reference to IAlgoServices, which is how it calls out to the Spark system. It will use IAlgoServices to subscribe to market events, launch and control child orders, and communicate its state to the larger Spark system.
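As an illustration, the sketch below shows an Algo that subscribes to quotes and launches child orders through a services object. The three methods (`subscribe_quotes`, `launch_child_order`, `report_state`) and the `Quote` struct are hypothetical placeholders for the SDK's real IAlgoServices calls, and `FakeServices` is a test double standing in for the server.

```cpp
#include <functional>
#include <string>
#include <utility>
#include <vector>

struct Quote {
    double bid;
    double ask;
};

// Hypothetical subset of IAlgoServices; real method names and signatures differ.
class IAlgoServices {
public:
    virtual ~IAlgoServices() = default;
    virtual void subscribe_quotes(const std::string& symbol,
                                  std::function<void(const Quote&)> on_quote) = 0;
    virtual void launch_child_order(const std::string& symbol, long qty, double price) = 0;
    virtual void report_state(const std::string& state) = 0;
};

// Toy Algo (would derive from IAlgoOrder in a real plugin): sends child buy orders of
// at most `clip` shares whenever the ask is at or below `limit`, until `total` is sent.
class LimitSweeper {
public:
    LimitSweeper(IAlgoServices& svc, std::string symbol, long total, long clip, double limit)
        : svc_(svc), symbol_(std::move(symbol)), remaining_(total), clip_(clip), limit_(limit) {}

    void do_launch() {
        svc_.report_state("working");
        // The callback captures `this`, so the Algo must outlive its subscription.
        svc_.subscribe_quotes(symbol_, [this](const Quote& q) { on_quote(q); });
    }

    long remaining() const { return remaining_; }

private:
    void on_quote(const Quote& q) {
        if (remaining_ <= 0 || q.ask > limit_) return;
        long qty = remaining_ < clip_ ? remaining_ : clip_;
        svc_.launch_child_order(symbol_, qty, q.ask);
        remaining_ -= qty;  // tracks quantity sent, not quantity filled
        if (remaining_ == 0) svc_.report_state("done");
    }

    IAlgoServices& svc_;
    std::string symbol_;
    long remaining_;
    long clip_;
    double limit_;
};

// Test double standing in for the server: records what the Algo asked for.
class FakeServices : public IAlgoServices {
public:
    std::function<void(const Quote&)> handler;
    std::vector<long> child_qtys;
    std::vector<std::string> states;

    void subscribe_quotes(const std::string&, std::function<void(const Quote&)> h) override {
        handler = std::move(h);
    }
    void launch_child_order(const std::string&, long qty, double) override {
        child_qtys.push_back(qty);
    }
    void report_state(const std::string& s) override { states.push_back(s); }
};
```

Because the fake records every request, the logic can be exercised without a running server: with a total of 250, a clip of 100 and a limit of 10.0, feeding asks of 10.5, 10.0, 9.9, 9.8 and 9.0 produces child orders of 100, 100 and 50. A real Algo would also react to fill events rather than counting sent quantity.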

Fully Integrated

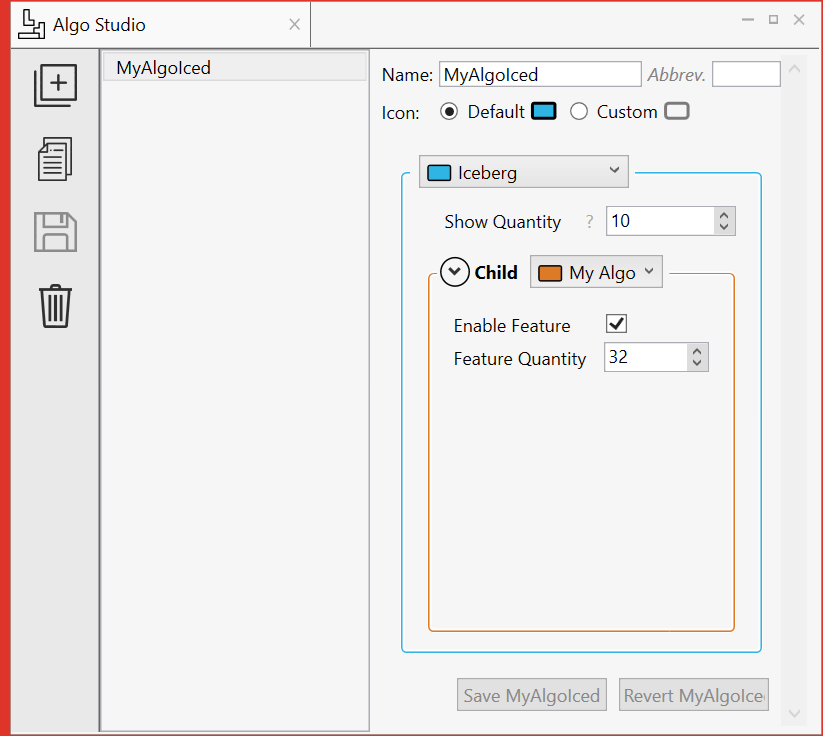

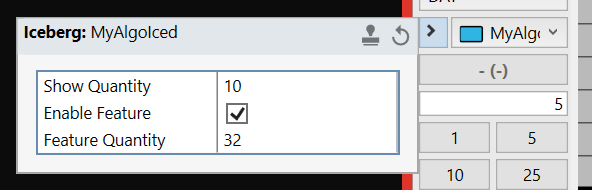

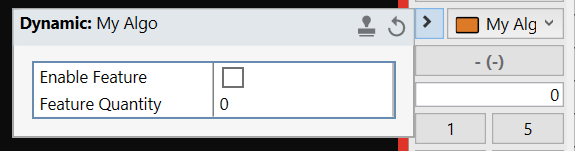

When a Spark Server plugin is loaded, it registers its exposed Algos with the Spark Server through the init_spark_plugin callback. This callback lets each Algo describe the parameters it expects, so the Spark Client can offer them for entry automatically.
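A registration entry point might look roughly like the following. The `PluginRegistry`, `AlgoDescriptor`, and `ParamSpec` types, the `extern "C"` export, and the `MyTWAP` Algo with its three parameters are all assumptions made for this sketch; the actual init_spark_plugin signature is defined by the SDK.

```cpp
#include <functional>
#include <memory>
#include <string>
#include <utility>
#include <vector>

// Hypothetical parameter description; the SDK's real types will differ.
enum class ParamType { Integer, Price, Text };

struct ParamSpec {
    std::string name;
    ParamType type;
    bool required;
};

struct IAlgoOrder {  // minimal stand-in for the SDK base class
    virtual ~IAlgoOrder() = default;
};

struct AlgoDescriptor {
    std::string name;                                      // name shown to users
    std::vector<ParamSpec> params;                         // drives parameter entry in a client
    std::function<std::unique_ptr<IAlgoOrder>()> create;   // factory called once per order
};

struct PluginRegistry {
    std::vector<AlgoDescriptor> algos;
    void register_algo(AlgoDescriptor d) { algos.push_back(std::move(d)); }
};

struct MyTwap : IAlgoOrder {};  // placeholder Algo body

// C linkage keeps the symbol name unmangled, so a loader could find it with dlsym.
extern "C" void init_spark_plugin(PluginRegistry& registry) {
    registry.register_algo({
        "MyTWAP",
        {{"quantity", ParamType::Integer, true},
         {"limit_price", ParamType::Price, false},
         {"end_time", ParamType::Text, true}},
        [] { return std::make_unique<MyTwap>(); },
    });
}
```

Registering a factory rather than a ready-made object lets the server construct a fresh Algo instance for each order; whether Spark works this way is likewise an assumption of the sketch.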

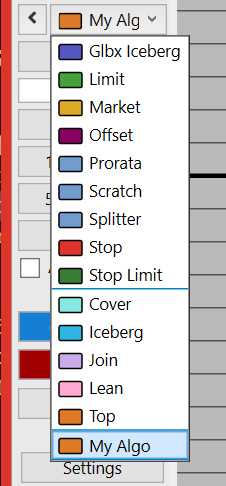

Compose your Algo with out-of-the-box Algos in the Spark Algo Studio to create new functionality on the fly.